The Rise of NeRFs And AI in VFX

AI is reshaping the VFX industry, paving the way for unprecedented advances in creating realistic 3D models and revolutionising workflows. In this comprehensive blog post, we delve into Neural Radiance Fields (NeRFs) and their potential to transform the way we approach VFX. Discover the differences between photogrammetry and NeRFs, explore AI-generated textures, and learn about AI solutions for animating and compositing CG characters. Join us as we navigate the future of VFX and stay at the forefront of technological advancements.

The Power of NeRFs: Redefining 3D Modelling in VFX

Neural Radiance Fields (NeRFs) have the power to transform the VFX industry, particularly in the realm of AI VFX and 3D modelling. As NeRFs gain prominence, the industry is witnessing a groundbreaking shift in how realistic 3D models are created. Using NeRF tools such as Luma AI, VFX artists can generate incredibly detailed and lifelike 3D representations from ordinary photographs or video. NeRFs offer an alternative to traditional modelling techniques and open up new possibilities for photorealistic visual effects.

NeRFs excel at handling scenes with complicated geometry and appearance, thanks to their effective optimisation and rendering capabilities. By optimising a neural radiance field from a set of photographs, VFX artists can render novel views of a scene (viewpoints that were never photographed) with realistic lighting and intricate detail. In the original paper, NeRFs outperformed prior work on neural rendering and view synthesis, raising the quality and realism achievable in VFX productions.

Integrating NeRFs with other AI techniques and software, such as Luma AI, could enable fully AI-generated textures and dynamic simulations, further enhancing the versatility and potential of this technology. With continuous advances in NeRFs and their applications in VFX, the future holds promising opportunities for pushing the boundaries of realism and creativity in 3D modelling and visual effects.

San Gimignano | Stylised NeRF

Technical Computing Process of NeRFs

NeRFs were introduced by researchers Ben Mildenhall, Pratul P. Srinivasan, Matthew Tancik, Jonathan T. Barron, Ravi Ramamoorthi and Ren Ng, who deserve special mention. Their paper is referenced at the bottom of the page.

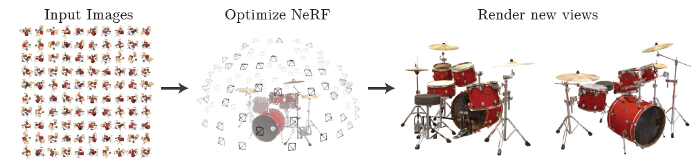

They found a way to generate detailed 3D representations from 2D images using neural networks and deep learning. The underlying concept of NeRFs revolves around the relationship between images and the 3D scene they depict: if a model of the scene can reproduce every input photograph, it has captured the scene's geometry and appearance.

The computing process of NeRFs involves training a neural network to model a volumetric representation of a scene. The only input required is a set of images of the scene with known camera poses (the position and orientation of the camera for each image); no 3D geometry needs to be supplied. The neural network learns to reconstruct the 3D structure and appearance of the scene by optimising its parameters. The optimisation minimises the difference between the views rendered from the network and the input images, gradually improving the accuracy and fidelity of the NeRF model.
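The objective described above can be sketched as a photometric loss: the mean squared error between pixel colours rendered by the model and the matching pixels of the input photographs. The sketch below is a toy, assuming a hypothetical "model" whose parameters are simply the predicted RGB values of two pixels, so that the gradient can be written by hand. In a real NeRF the same kind of loss is used, but its gradient flows back through the volume renderer into the network weights.

```python
# Toy illustration of the photometric objective used to train a NeRF.
# Assumption: the "model" is just a list of predicted RGB colours (one per
# pixel), not a neural network, so the gradient can be computed by hand.

def photometric_loss(rendered, target):
    """Mean squared error over all colour channels of all pixels."""
    n = 0
    total = 0.0
    for r_px, t_px in zip(rendered, target):
        for r, t in zip(r_px, t_px):
            total += (r - t) ** 2
            n += 1
    return total / n

def gradient_step(rendered, target, lr):
    """One gradient-descent step on the toy parameters (the pixel colours).

    d/dr of mean((r - t)^2) is 2 * (r - t) / n for each channel.
    """
    n = sum(len(px) for px in rendered)
    return [
        tuple(r - lr * 2.0 * (r - t) / n for r, t in zip(r_px, t_px))
        for r_px, t_px in zip(rendered, target)
    ]

# Hypothetical ground-truth pixels from an input photograph (RGB in [0, 1]).
target = [(0.9, 0.2, 0.1), (0.1, 0.6, 0.8)]
# Initial (poor) predictions from the untrained "model".
params = [(0.5, 0.5, 0.5), (0.5, 0.5, 0.5)]

losses = []
for step in range(30):
    losses.append(photometric_loss(params, target))
    params = gradient_step(params, target, lr=1.0)

print(f"initial loss {losses[0]:.4f}, final loss {losses[-1]:.2e}")
```

Each step shrinks the error, which is the "gradually improving" behaviour the paragraph describes; with a real network, many such steps over many randomly sampled rays are needed.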

Previous volumetric techniques for synthesising new views of scenes struggle with higher-resolution images, because storing a scene as a discrete grid of voxels requires a lot of memory and computation. The NeRF authors addressed this by using a deep neural network to represent the scene as a continuous volume. This approach not only produces better-quality renderings than previous methods but also requires much less storage space.
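To make the storage argument concrete, here is a back-of-the-envelope comparison. The sizes are assumptions chosen for illustration: a dense 512³ voxel grid storing RGB plus density as 32-bit floats, versus a plain fully connected network with eight hidden layers of 256 units taking the raw 5D input. The original paper's network has a similar depth and width but differs in detail (it encodes its inputs and uses a skip connection), so its exact parameter count is not the one computed here.

```python
# Back-of-the-envelope storage comparison (illustrative assumptions only).

BYTES_PER_FLOAT32 = 4

def voxel_grid_bytes(resolution, channels=4):
    """Dense grid storing RGB + density per voxel as float32."""
    return resolution ** 3 * channels * BYTES_PER_FLOAT32

def mlp_param_count(in_dim, hidden_dim, hidden_layers, out_dim):
    """Weights + biases of a plain fully connected network."""
    count = in_dim * hidden_dim + hidden_dim  # input -> first hidden layer
    count += (hidden_layers - 1) * (hidden_dim * hidden_dim + hidden_dim)
    count += hidden_dim * out_dim + out_dim   # last hidden -> output
    return count

grid = voxel_grid_bytes(512)
params = mlp_param_count(in_dim=5, hidden_dim=256, hidden_layers=8, out_dim=4)
mlp = params * BYTES_PER_FLOAT32

print(f"voxel grid: {grid / 2**30:.2f} GiB")
print(f"MLP: {params} parameters, {mlp / 2**20:.2f} MiB")
print(f"ratio: about {grid / mlp:.0f}x")
```

Under these assumptions the grid needs 2 GiB while the network's weights fit in under 2 MiB; the exact ratio depends entirely on the sizes chosen.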

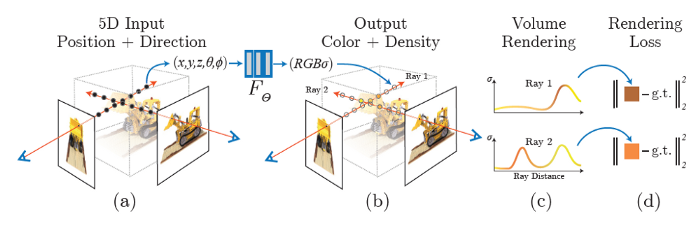

Neural Radiance Field Scene Representation – Image Source: Matthew Tancik

NeRFs use ray marching as a key technique for rendering the volumetric representation of a scene. For each pixel, a ray is cast from the camera through the scene and points are sampled along it; the network predicts the colour and density at each sample, and these values are accumulated (integrated) along the ray to produce the pixel's final colour.
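The accumulation along each ray follows the volume rendering equation from the NeRF paper. In its discrete form, sample i along a ray has density σ_i, colour c_i and spacing δ_i to the next sample; its opacity is α_i = 1 − exp(−σ_i δ_i), and it is weighted by the transmittance T_i = exp(−Σ_{j<i} σ_j δ_j), the fraction of light that reaches it unblocked. The minimal sketch below composites hand-picked sample values, which are hypothetical rather than produced by a trained network.

```python
import math

def composite_ray(sigmas, colours, deltas):
    """Discrete NeRF-style volume rendering for a single ray.

    sigmas:  volume density at each sample, ordered front to back
    colours: RGB tuple at each sample
    deltas:  distance from each sample to the next
    Returns the pixel colour and the accumulated opacity.
    """
    pixel = [0.0, 0.0, 0.0]
    transmittance = 1.0  # T_i: fraction of light still unblocked
    for sigma, colour, delta in zip(sigmas, colours, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this segment
        weight = transmittance * alpha
        for k in range(3):
            pixel[k] += weight * colour[k]
        # After the update this equals exp(-sum of sigma * delta so far).
        transmittance *= 1.0 - alpha
    return tuple(pixel), 1.0 - transmittance

# Hypothetical samples: empty space, then a dense red surface, then a
# blue surface hidden behind it.
sigmas = [0.0, 50.0, 50.0]
colours = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]
deltas = [0.1, 0.1, 0.1]

rgb, opacity = composite_ray(sigmas, colours, deltas)
print(rgb, opacity)
```

The blue surface contributes less than 1% of the pixel because the red surface in front has already absorbed almost all of the light, which is how occlusion falls out of this rendering model.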

The network at the heart of a NeRF takes a single continuous 5D coordinate as input: the spatial location (x, y, z) and the viewing direction (θ, φ). It outputs the volume density and the view-dependent emitted radiance (colour) at that location. Because density depends only on position while colour can also vary with viewing direction, this representation lets NeRFs reconstruct the 3D geometry and appearance of a scene with remarkable accuracy, accounting for occlusion and view-dependent effects such as reflections and specular highlights, resulting in highly detailed and realistic NeRF models.
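The 5D interface can be sketched as follows. The viewing direction (θ, φ) is converted into a 3D unit vector (the form in which the original paper feeds direction to its network), and a field function maps position plus direction to a density and a colour. Here `toy_radiance_field` is a hypothetical, hand-written stand-in for a trained network: a solid unit sphere whose colour brightens slightly when viewed along one axis, mimicking a view-dependent highlight. As in the paper, density depends only on position.

```python
import math

def direction_from_angles(theta, phi):
    """Convert polar angle theta and azimuth phi (radians) to a unit vector."""
    return (
        math.sin(theta) * math.cos(phi),
        math.sin(theta) * math.sin(phi),
        math.cos(theta),
    )

def toy_radiance_field(x, y, z, theta, phi):
    """Hypothetical stand-in for a trained NeRF network.

    Input:  a 5D coordinate (position x, y, z and viewing direction theta, phi).
    Output: (density, (r, g, b)).
    Density depends only on position; colour also depends on direction.
    """
    inside = x * x + y * y + z * z <= 1.0  # unit sphere at the origin
    if not inside:
        return 0.0, (0.0, 0.0, 0.0)
    _, _, dz = direction_from_angles(theta, phi)
    # Brighten the red channel when viewing along +z: a crude "specular" effect.
    highlight = max(0.0, dz) * 0.3
    return 20.0, (0.5 + highlight, 0.5, 0.5)

# Query the same point from two viewing directions.
print(toy_radiance_field(0.0, 0.0, 0.5, 0.0, 0.0))          # viewing along +z
print(toy_radiance_field(0.0, 0.0, 0.5, math.pi / 2, 0.0))  # viewing along +x
```

Both queries return the same density, since the point has not moved, but different colours, which is the property that lets a NeRF represent shiny surfaces.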

Continued advances in how NeRFs are computed keep expanding the potential applications of this technology in VFX. The ability to generate realistic lighting, intricate details, and accurate 3D representations opens up new possibilities for creating captivating visual effects and pushing the boundaries of realism in VFX productions.

Goodwood House | Stylised NeRF

Luma AI: Simplifying NeRFs

Luma AI and its NeRF plugin for Unreal Engine 5 have made the process of creating NeRFs more accessible and user-friendly. Luma AI’s tools and software provide intuitive interfaces and streamlined workflows, enabling VFX artists to harness the power of NeRFs without extensive technical expertise. With Luma AI, even inexperienced users can achieve impressive results for a relatively low price. Currently, however, input uploads are limited to 5 GB.

Luma AI's software uses AI algorithms to analyse the input data, generate the volumetric representation of the scene, and refine the NeRF model for optimal results. By abstracting away the technical complexities, Luma AI lets artists focus on their creative vision, resulting in stunning NeRF models.

Image Source: NeRF Paper (Mildenhall, Srinivasan, Tancik, et al.), 2020

The Future of NeRFs: Advancements and Possibilities

Scalability:

One area of future advancement lies in scalability. Efforts are being made to optimise NeRF algorithms to handle larger and more complex scenes. This scalability will allow VFX artists to create NeRF models of entire environments, such as sprawling cityscapes or vast landscapes, with the same level of detail and realism as smaller-scale models.

Dynamic Scenes:

Ongoing research focuses on the integration of dynamic elements into NeRFs. Currently, NeRFs predominantly represent static scenes. However, researchers are exploring techniques to incorporate dynamic objects and animations into NeRFs, enabling the creation of interactive and evolving scenes. This would open up new possibilities for creating dynamic visual effects and interactive narratives.

AI Integration:

Another area of exploration is the integration of NeRFs with AI-driven simulation techniques. By combining NeRFs with AI algorithms for simulating phenomena such as realistic lighting effects, fluid dynamics, and other physical interactions, VFX artists can enhance the visual fidelity and believability of NeRF models. This integration holds promise for creating even more immersive and realistic virtual environments.

New NeRF Models

The initial NeRF model had certain drawbacks: training and rendering were slow, and it was limited to static scenes. Furthermore, it lacked flexibility, as a NeRF model trained on one scene could not be reused for other scenes.
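A quick calculation shows why rendering with the original model is slow: every pixel needs many network evaluations along its ray. The figures below are assumptions for illustration: an 800 × 800 image with one ray per pixel, and the original paper's hierarchical sampling scheme, in which a coarse network is evaluated at 64 samples per ray and a fine network at those 64 plus 128 further samples.

```python
# Rough count of network evaluations needed to render one image.
# Assumptions (illustrative): 800 x 800 pixels, one ray per pixel,
# 64 coarse samples per ray, and a fine pass evaluated at those 64
# samples plus 128 additional ones.

width, height = 800, 800
coarse_samples = 64
extra_fine_samples = 128

rays = width * height
evals_per_ray = coarse_samples + (coarse_samples + extra_fine_samples)
total_evals = rays * evals_per_ray

print(f"{rays:,} rays x {evals_per_ray} evaluations = {total_evals:,} per frame")
```

Under these assumptions a single frame needs over 160 million network evaluations, and training repeats comparable work many times over.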

To overcome these limitations, researchers have made notable advancements by developing various methods that build upon the NeRF framework. These models aim to address the aforementioned issues and enhance the overall capabilities of NeRF for a wider range of scenes and improved performance.

This video by Corridor Crew below gives a fun overview of NeRFs and their potential.

Visualskies' Commitment to NeRFs and AI-Driven VFX

Our entire team at Visualskies is always looking out for new advancements in AI-driven VFX, constantly seeking ways to push the boundaries of creativity and deliver exceptional visual experiences that no-one else can offer. As AI technologies and tools such as NeRFs continue to reshape the VFX industry, we are dedicated to embracing these innovations and integrating them into our workflows as and when they are mature enough for production.

For the time being, we plan to use NeRFs in a stylised way, as we don’t believe the technology is quite ready to deliver the high resolution you can expect from our other 3D scanning services. We are excited to explore its remarkable ability to capture reflections and shiny surfaces with ease, something that any 3D capture specialist knows to be very difficult.

As a forward-thinking company, Visualskies will provide the best scanning solution for each client's needs. So don’t hesitate to contact us if you want to bring these new technologies into your workflow.

Our Berlin Office In Restoration | Stylised NeRF

Innovations in VFX Workflows: AI Solutions and AI-Generated Textures

AI-driven innovations are redefining workflows and elevating visual realism. Wonder Dynamics’ groundbreaking AI solutions automate labour-intensive tasks, specifically character animation, lighting, and compositing within live-action scenes. Meanwhile, Withpoly’s AI-generated textures push the boundaries of visual appeal, adding depth and detail to 3D models with seamless integration into the production pipeline.

These advancements optimise workflows, reduce production time, and deliver exceptional visual fidelity. By embracing AI in VFX, studios will lead the industry into a new era, delivering extraordinary visuals and captivating experiences with relative ease compared to past methods. As AI progresses, we expect to see these techniques rapidly adopted in the biggest shows.

Visualskies actively explores these AI-driven advancements, harnessing their power to propel the future of creativity in VFX. Join us on this exciting journey as we shape the evolution of VFX through the transformative capabilities of AI.

Research Paper Referenced in The Article

TYPE – inproceedings (citation key: mildenhall2020nerf)

TITLE – NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis

AUTHORS – Ben Mildenhall, Pratul P. Srinivasan, Matthew Tancik, Jonathan T. Barron, Ravi Ramamoorthi and Ren Ng

YEAR – 2020

BOOKTITLE – ECCV (European Conference on Computer Vision)

WHERE TO FIND US?

Visualskies is proud to offer our expert Photogrammetry services for VFX across multiple locations worldwide. Our presence in key cities enables us to provide prompt and efficient service to our clients. You can find us in the following locations.

NeRFs and AI London

5 Havelock Terrace

Battersea

London

SW8 4AS

United Kingdom